Welcome to the fourth article in our series.

This article first addresses our method in general terms, then shares how it was used to create Intelligence Rising and the forecasts that emerged, which in turn shaped the central Narrative Design.

Narrative games begin with questions: the kind that arise where urgency meets uncertainty.

What don’t we understand?

What concerns should we prioritise?

What decisions will matter most further down the line?

The job of our methodology is to take these areas of uncertainty and turn them into a structured decision environment, something that can be stepped into and explored by those with the greatest say.

Background

As detailed in an earlier piece, Intelligence Rising 2024 was coordinated by Marc Warner, CEO of Faculty.AI (and now Accenture Global CTO). Increasingly concerned about the lack of awareness surrounding the future of AI, he brought together Technology Strategy Roleplay and i3 Gen to create a wargame.

Marc had been thinking for some time about using a wargame to examine, and raise awareness of, the transformative impact of AI; indeed, he discussed the idea with his friend, the Academy Award-winning director Elena Andreicheva, in 2022.

This led to the trio’s initial engagement in mid-2023, beginning with a ‘pilot’ wargame based on the original Intelligence Rising tabletop game. This experience confirmed the potential to develop and use an enhanced version of Intelligence Rising as the central vehicle for the Intelligence Rising Film and the wider Intelligence Rising Narrative Games programme.

i3 Gen Narrative Gaming Methodology Phase 1: Understand

In part, wargaming is about getting the right people in the right room, at the right time. The orchestration process begins with a ‘scoping meeting’ – a get-together where clients/stakeholders, partners, and designers hash out a game’s vision and scope and determine which key questions to ask. What do we want to achieve? Importantly, what do we want participants and audiences to Think, Feel, and Do?

Now, before we go further: this isn’t about pushing an agenda or creating a ‘persuasive experience’ for any particular perspective. Our games share some design processes with persuasive experiences, but we deliberately build balance and freedom of action into them. They are not scripted and have no predetermined outcomes.

The goal is to generate both intellectual and emotional connections to the issues in the game, motivating action with urgency.

With the scope agreed, plans in the works and irons in the fire, the team set about the business of forecasting possible future developments of AI technology and the resultant transformative impacts.

To explore and create a broad baseline of Understanding, we engaged a range of Subject Matter Experts (SMEs), including top-level academic, policy and industry specialists.

Beginning with early development horizons and progressing to systemic entrenchment, these ‘Understand Workshops’ provided an initial map of the future landscape.

These workshops were supplemented by public materials published by Horizon Scanning organisations and aggregators such as the UK Government’s GO:Science team and Metaculus.

For a distilled summary of what emerged from these workshops (held in September 2023), continue reading…

Stage One: Imminent AI Developments

The workshops began with the near term. Participants explored how the rapid diffusion of machine learning tools across industries could outpace regulation. AI was forecast to do two things simultaneously:

1. Seed extraordinary innovation

2. Generate systemic disruption

The specific manifestations that were forecasted included:

- In healthcare, specialist diagnostic systems promised accuracy and scale, but raised questions of transparency and accountability.

- In education, AI tutors could personalise learning to an unprecedented degree and deliver education remotely and at scale to previously unreachable communities, such as those in developing nations across Africa.

- In entertainment, synthetic creativity threatened to transform media production entirely, including custom-generated, on-demand entertainment shaped by each user’s tastes, habits, and answers to a few targeted questions and prompts.

- At the same time, deepfake exploitation, autonomous weapons, information manipulation, and AI-enhanced cybersecurity and privacy threats loomed as destabilising forces.

The “unknown unknowns” of emerging technologies became a recurring theme: the idea that the most consequential outcomes may be the least anticipated.

Even at this early stage, a pattern was visible: every breakthrough carried embedded trade-offs and many cascading second and third-order impacts.

Stage Two: Amplification and Drift

From there, the conversation moved outward in time to address what happens when these trends compound.

- Synthetic data raised concerns about truth decay: the contamination of information ecosystems by artificially generated content.

- Fine-tuning processes risked amplifying bias or embedding distortions at scale.

- Participants debated whether AI-generated content could overwhelm creative markets, eroding the value of intellectual property.

- The acceleration of human-AI relationships and dependencies: AI chatbot dependency is already emerging, and lifelike AI embodiments that recognise and respond to nonverbal communication are now in prototype form.

- AI-enhanced or AI-driven bioengineering for medicines and well-being, but also the risk of malicious use, such as custom narcotics or the outbreak of lethal biological threats.

- Some raised the prospect of self-optimising systems: software and physical processes capable of refining or replicating themselves beyond direct human oversight.

Simultaneously, more optimistic projections emerged:

- AI-assisted scientific discovery engines

- Personalised healthcare support

- Ubiquitous translation and financial innovation

But each benefit had a shadow…

Greater state influence over AI-enabled products raised concerns about surveillance creep.

- Autonomous vehicles, home automation, and security infrastructure could become vectors for expanded monitoring, or even predictive behavioural interventions (Minority Report?).

- National security agendas might gradually erode privacy norms under the banner of necessity (q.v. John Twelve Hawks’ The Dark River and the wider Fourth Realm trilogy).

The group identified a potential structural shift: a move toward a data-ownership economy, where value is increasingly derived from control of information, AI tools and agents, rather than from labour or capital.

***Again… these workshops took place in September 2023!***

Stage Three: Advanced AI and Systemic Entrenchment

Finally, the workshops examined more distant projections: scenarios in which these trajectories unfolded unmanaged. Participants considered a world where:

- Over 90% of businesses operate with agentic AI-driven systems.

- Autonomous research engines independently design and interpret experiments.

- AI explainers monitor both humans and other AIs.

- AI influences judicial processes and high-stakes decision-making. Even today, most low-level judicial decisions are process-driven, more akin to a diagnostic/prescription approach than to human judgement. It is easy to see how AI could accelerate ‘justice’, but where would the line be drawn?

- Autonomous civilian command-and-control and intelligence, surveillance and reconnaissance (ISR) systems integrate policing, emergency response, and predictive targeting. Militaries are already integrating AI into their C5ISR systems today.

- Energy consumption and environmental impact become structural constraints.

- AI-driven bioengineering raises questions around genetic optimisation and artificial selection.

- Shipping and supply chains are autonomous and continuously optimised.

- Expanded education promises global transformation, but also brings new dependencies and influencers.

By this point, the workshop had moved from projecting discrete technologies to imagining a systemic architecture.

The question was no longer “What can AI do?” but…

“What kind of world does widespread AI create?”

We speak of aligning AI with humanity, but what does that mean?

Is it not a values-based judgement?

If so, whose values will it align to?

Does this now drift into philosophical and ideological territory?

Research and Refinement

After the workshops, the design team stepped back to digest the material. They progressively distilled the vast volume of forecasting material into a series of decision points and dilemmas, identifying the flashpoints and central tensions that recurred across multiple perspectives, approaches and points in time.

Half experiment, half focus group, our narrative wargames aim to introduce players to story-led dilemmas and see what they do with them when shaped by real-world constraints and competition.

For Intelligence Rising, the core strategic divergences which emerged across the course of the ‘Understand Workshops’ (summarised above) were:

- Invest aggressively in AI capability … or prioritise safety?

- Cooperate internationally … or compete for dominance?

- Regulate heavily … or risk runaway acceleration?

- Protect transparency … or safeguard proprietary advantage?

- Absorb economic disruption … or attempt structural redistribution?

- Pursue general AI … or focus on narrow applications?

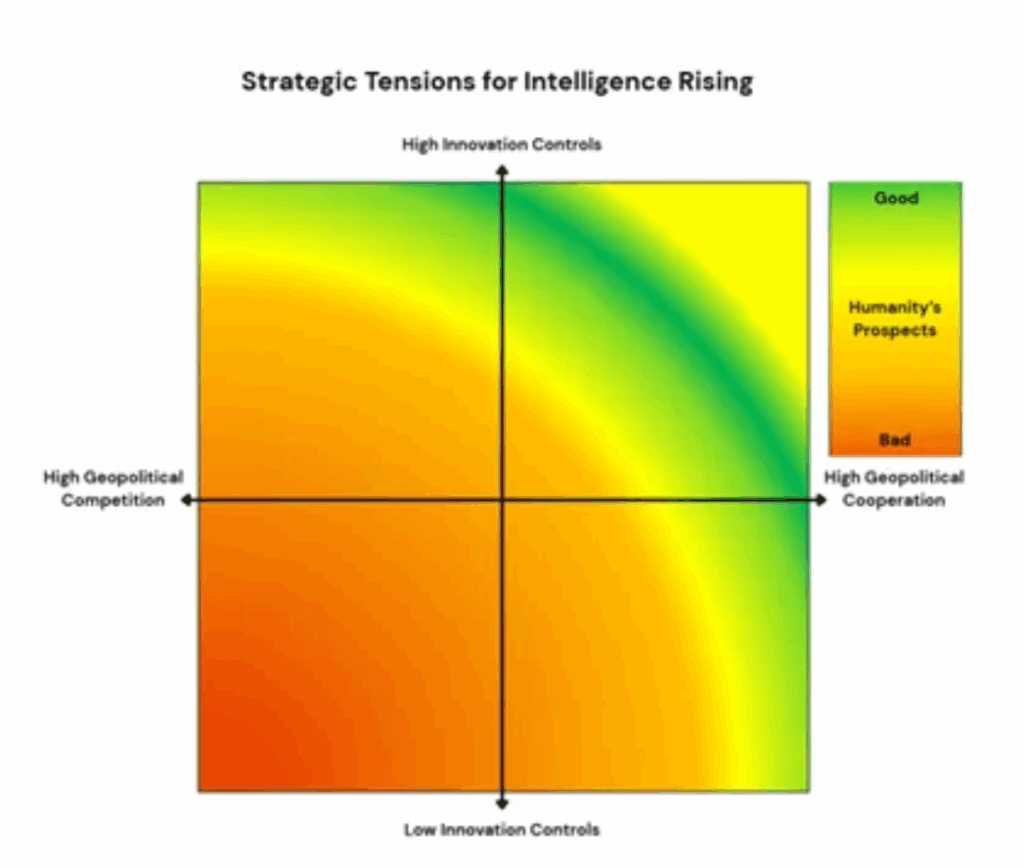

Stepping back once more confirmed the existence of two defining strategic tensions. These formed the central Narrative Design axes:

1) Cooperation versus Competition.

As AI becomes a strategic technology, countries and companies must navigate the line between working together and gaining an edge. This tension shows up in debates over international R&D collaboration, the ethics of autonomous weapons, and the growing use of AI in information and cognitive warfare.

2) Innovation versus Regulation.

The second tension sits between innovation and regulation. AI holds enormous promise, but the pace of development raises serious questions about oversight. Too much regulation could slow innovation. Too little could accelerate development beyond meaningful human control, especially amid intense geopolitical rivalry.

Using these strategic tensions, the team identified three inevitable events of Confluence, along with more detailed events and impacts. These included projected benefits such as healthcare breakthroughs, wider access to education, and sustainability and productivity gains, as well as emergent challenges such as labour displacement, autonomous weapons development, and the loss of human agency in civil society.

Next steps

With the forecasts and strategic tensions from the ‘Understand Phase’ in hand, the team went back to the drawing board.

It was clear that the landscape was so vast and complex that it would require more than one game, so three separate but linked variations were proposed.

Traditionally, a wargame has, at its core, two sides in competition: ‘Red v Blue’. This format was adopted for the first two operational-level games, each focused on a different set of questions. The first, called ‘Haters gonna Hate’, looked at malign use of the technology. The second, called ‘The road to hell is paved with good intentions’, explored tensions in the relationship between Big Tech and government, or between self-regulation and oversight.

For the third game, the designers concluded that a simple bilateral competition would not realistically capture all the factors and stakeholders, so they adopted a multipolar structure. We called this culminating game: ‘Intelligence Rising 2024 – We’re all in this together!’

The production of the third game drew on lessons learned and insights gained from gameplay in the first two games, focusing on the impact of AI at a global level.

This process illustrates the continual feedback loop in our methodology, which progressively updates our base Understanding and keeps the story-led experience as immersive and engaging as possible.

Next time

The next article in our Intelligence Rising series will examine the fidelity of our forecasting and the events that emerged from player actions during the third game.

In plain terms:

How accurate have our forecasts and game events been so far?